Introduction

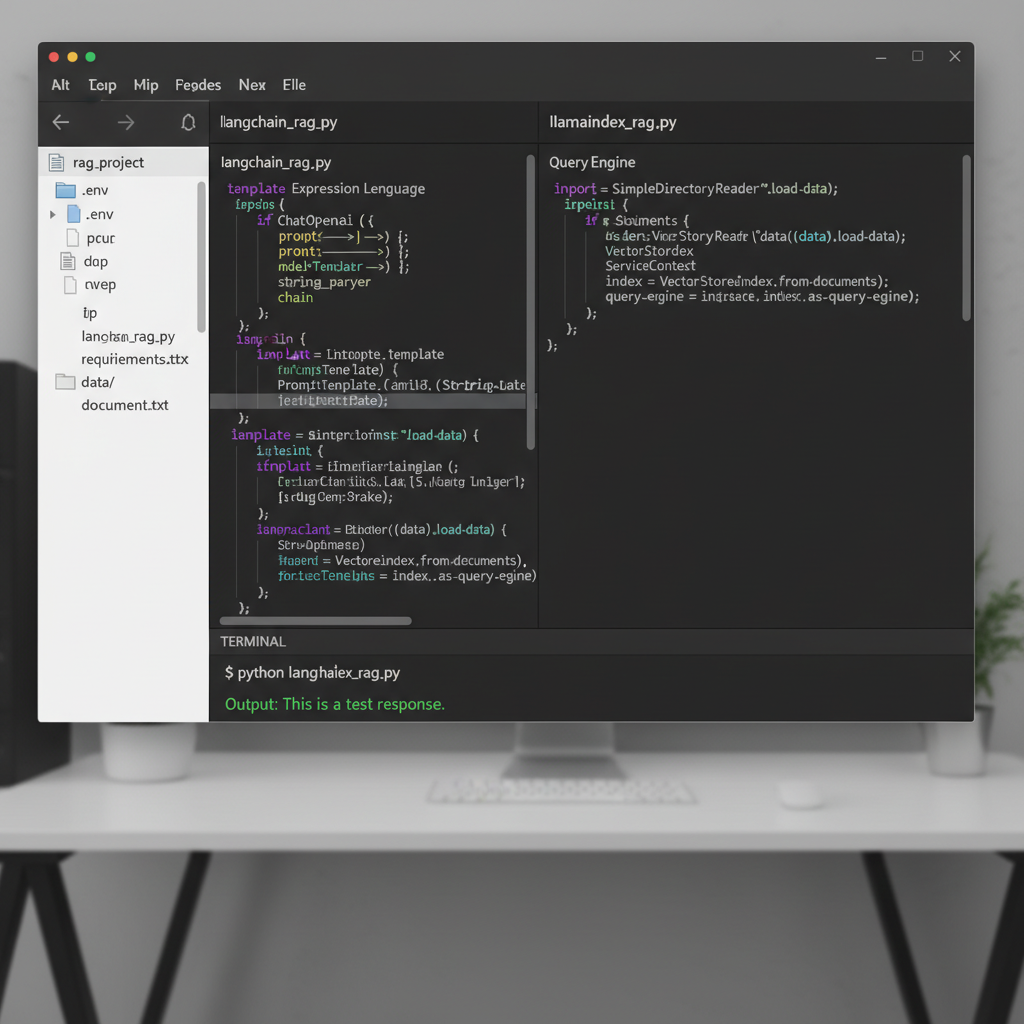

In 2026, building a Retrieval-Augmented Generation (RAG) system is no longer about just connecting a PDF to an LLM. It’s about sophisticated data orchestration, agentic reasoning, and maintaining low latency at scale. When deciding between LangChain and LlamaIndex for RAG, the choice often boils down to your specific architecture: do you need a general-purpose Swiss Army knife or a high-performance scalpel for data retrieval?

I’ve spent the last year building production RAG pipelines using both frameworks. While the lines between them have blurred as both ecosystems matured, they still maintain distinct philosophies. LangChain has doubled down on being the “everything framework” for agents, while LlamaIndex has remained the gold standard for deep data indexing and retrieval optimization. If you are just starting out, you might want to work through my step-by-step retrieval-augmented generation tutorial to get the basics down before diving into framework specifics.

LangChain: The Versatile Multi-Agent Orchestrator

LangChain is the most popular framework in the AI space for a reason. It provides a massive ecosystem of integrations and a highly modular way to build complex LLM applications. In my experience, its greatest strength is not the RAG itself, but the orchestration surrounding it.

Core Features

- LangGraph: A powerful library for building stateful, multi-agent systems with cycles, which is essential for complex RAG loops.

- LCEL (LangChain Expression Language): A declarative way to chain components together with built-in support for streaming and async.

- Ecosystem: More than 700 integrations with vector stores, tools, and models.
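
To make the idea of a RAG loop "with cycles" concrete, here is a minimal, framework-free sketch in plain Python. It deliberately does not use LangGraph's API. The names `RagState`, `retrieve`, `rewrite_query`, and `run_rag_loop`, along with the toy corpus and synonym table, are all hypothetical stand-ins. The point is only the control flow: retrieve, check the result, and cycle back through a query rewrite when nothing useful comes back.

```python
from dataclasses import dataclass, field

# Hypothetical toy corpus; a real system would query a vector store instead.
CORPUS = {
    "langgraph": "LangGraph builds stateful agent graphs with cycles.",
    "lcel": "LCEL chains components declaratively.",
}

# Hypothetical rewrite table standing in for an LLM-based query rewriter.
SYNONYMS = {"agent graph": "langgraph"}


@dataclass
class RagState:
    """State carried from one step of the loop to the next."""
    question: str
    query: str
    documents: list[str] = field(default_factory=list)
    attempts: int = 0


def retrieve(state: RagState) -> RagState:
    """Keyword lookup standing in for vector search."""
    query = state.query.lower()
    state.documents = [text for key, text in CORPUS.items() if key in query]
    state.attempts += 1
    return state


def rewrite_query(state: RagState) -> RagState:
    """Replace known phrases so the next retrieval attempt can succeed."""
    lowered = state.query.lower()
    for phrase, replacement in SYNONYMS.items():
        if phrase in lowered:
            lowered = lowered.replace(phrase, replacement)
    state.query = lowered
    return state


def run_rag_loop(question: str, max_attempts: int = 3) -> RagState:
    """Retrieve, and cycle through a rewrite step until documents are found."""
    state = RagState(question=question, query=question)
    while state.attempts < max_attempts:
        state = retrieve(state)
        if state.documents:  # grading step: accept any hit
            break
        state = rewrite_query(state)  # the cycle: go back and try again
    return state
```

Calling `run_rag_loop("How do I build an agent graph?")` misses on the first attempt, rewrites the query, and finds a document on the second. The `max_attempts` cap is the piece worth copying into any real loop, since a cycle without a bound can spin indefinitely.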

Pros of LangChain

- Unmatched flexibility for building complex, non-linear agent workflows.

- Excellent observability through LangSmith for debugging deep chains.

- Huge community support and extensive documentation for almost any use case.

- Seamless integration with LangGraph for multi-agent systems.

Cons of LangChain

- Verbosity: Simple tasks often require more boilerplate code than in LlamaIndex.

- Abstraction Overhead: The “Chains” can sometimes feel like a black box when something goes wrong.

- Rapid API Changes: The fast-moving nature of the project can lead to frequent breaking changes.

LlamaIndex: The Data-First RAG Specialist

If LangChain is about the “logic” of the application, LlamaIndex is about the “data.” Formerly known as GPT Index, it was built specifically to solve the problem of connecting large datasets to LLMs. For high-performance RAG, LlamaIndex often provides superior out-of-the-box results.

Core Features

- Data Connectors (LlamaHub): Specialized loaders for everything from Notion and Slack to obscure database formats.

- Advanced Indexing: Built-in support for hierarchical indices, summary indices, and knowledge graphs.

- Workflows: An event-driven orchestration layer, the project's latest, that rivals LangGraph for RAG-specific tasks.
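
"Event-driven" orchestration can be illustrated without any framework. The sketch below is not the LlamaIndex Workflows API. `Event`, `HANDLERS`, and `run_workflow` are hypothetical names, and the handlers return canned strings instead of calling a retriever or an LLM. It only shows the pattern: each step consumes one event and emits the next, and the run ends when a stop event appears.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Event:
    name: str
    payload: str


def on_start(event: Event) -> Event:
    """Pretend to retrieve context, then emit a 'retrieved' event."""
    return Event("retrieved", f"context for: {event.payload}")


def on_retrieved(event: Event) -> Event:
    """Pretend to synthesize an answer, then emit the terminal 'stop' event."""
    return Event("stop", f"answer based on [{event.payload}]")


# Maps an event name to the step that handles it.
HANDLERS = {"start": on_start, "retrieved": on_retrieved}


def run_workflow(question: str) -> str:
    """Dispatch events to handlers until a 'stop' event is produced."""
    queue = deque([Event("start", question)])
    while queue:
        event = queue.popleft()
        if event.name == "stop":
            return event.payload
        queue.append(HANDLERS[event.name](event))
    raise RuntimeError("workflow ended without a stop event")
```

Because steps are wired together by event name, not by direct calls, adding a reranking step in this sketch would mean registering one more handler and changing which event `on_start` emits.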

Pros of LlamaIndex

- Ease of Use: You can get a high-quality RAG pipeline running in 5-10 lines of code.

- Retrieval Quality: Optimized strategies like sentence-window retrieval and auto-merging retrieval are built-in.

- Efficiency: Generally lower latency for data-heavy operations.

- Deep focus on vector database selection and optimization.
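
For readers unfamiliar with sentence-window retrieval, the idea is to match the query against individual sentences but return the matched sentence together with its neighbors, so the LLM sees enough surrounding context. The following is a minimal sketch of that idea in plain Python, not LlamaIndex's implementation. It assumes token overlap as a stand-in for embedding similarity, and the function names are hypothetical.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def sentence_window_retrieve(text: str, query: str, window: int = 1) -> str:
    """Return the best-matching sentence plus `window` sentences on each side."""
    sentences = split_sentences(text)
    if not sentences:
        return ""
    query_tokens = tokenize(query)
    # Token overlap stands in for embedding similarity in this sketch.
    scores = [len(tokenize(s) & query_tokens) for s in sentences]
    best = max(range(len(sentences)), key=scores.__getitem__)
    start = max(0, best - window)
    end = min(len(sentences), best + window + 1)
    return " ".join(sentences[start:end])
```

The trade-off sits in `window`: a larger value gives the model more context per hit but spends more of the prompt budget on text that may be irrelevant.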

Cons of LlamaIndex

- Less flexible than LangChain for general-purpose application logic (e.g., non-RAG features).

- Smaller ecosystem of third-party tool integrations compared to LangChain.

- Documentation can sometimes be less comprehensive for non-core features.

Feature Comparison: LangChain vs LlamaIndex

To help you visualize the differences, here is how they stack up across the key pillars of RAG development in 2026.

| Feature | LangChain | LlamaIndex |
|---|---|---|
| Primary Focus | General LLM Orchestration & Agents | Data Retrieval & Indexing |
| Learning Curve | Moderate to Steep (due to LCEL) | Low (for RAG) / Moderate (for Workflows) |
| Data Loading | Broad, community-driven loaders | Deep, specialized LlamaHub connectors |
| Agent Support | Elite (LangGraph) | Excellent (Workflows) |
| Production Tooling | LangSmith / LangServe | LlamaCloud / LlamaParse |

Pricing and Open Source

Both frameworks are open-source (MIT license) and free to use for local development. However, their monetization strategies differ as you scale to production:

- LangChain: Primarily monetizes via LangSmith (observability/testing) and LangServe. Pricing is tiered based on the number of traces/logs processed.

- LlamaIndex: Monetizes through LlamaCloud and LlamaParse (a document parsing service). LlamaParse is particularly useful for complex PDFs and images, and is typically billed on a per-page credit model.

Best Use Cases: Which One Should You Use?

In my experience, the “winner” depends on your project goals:

Choose LangChain if…

- You are building a complex agent that needs to interact with many different APIs and tools.

- You need fine-grained control over the execution flow (e.g., custom state machines).

- Your app has many non-RAG features like chat history management or complex UI branching.

Choose LlamaIndex if…

- Your main challenge is indexing and searching through massive, messy datasets (PDFs, Excel, slide decks).

- You want the highest possible retrieval accuracy with minimal configuration.

- You are building a “Chat with your Data” application where query performance is the top priority.

My Verdict

I frequently use a hybrid approach. In 2026, the best developers often use LlamaIndex for the heavy lifting of data ingestion and retrieval (RAG) and then wrap that inside a LangGraph agent for complex conversational logic. If I had to pick just one for a pure RAG project today, LlamaIndex wins on developer velocity and retrieval quality. However, for a full-scale AI platform, LangChain’s ecosystem is still hard to beat.
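
The hybrid layering can be sketched without either framework. In the plain-Python example below, a retriever callable stands in for the role LlamaIndex plays, and a small agent class stands in for the role LangGraph plays. Every name here (`make_keyword_retriever`, `ConversationAgent`, `GREETINGS`) is hypothetical, and keyword matching replaces real vector search. What matters is the boundary: the agent only knows it was handed a function from a query to a list of passages.

```python
from typing import Callable

Retriever = Callable[[str], list[str]]

GREETINGS = {"hi", "hello", "thanks"}


def make_keyword_retriever(docs: list[str]) -> Retriever:
    """Retrieval layer stand-in: returns docs sharing a word with the query."""
    def retrieve(query: str) -> list[str]:
        terms = set(query.lower().split())
        return [d for d in docs if terms & set(d.lower().split())]
    return retrieve


class ConversationAgent:
    """Orchestration layer stand-in: routes messages and keeps chat history."""

    def __init__(self, retriever: Retriever) -> None:
        self.retriever = retriever
        self.history: list[tuple[str, str]] = []

    def respond(self, message: str) -> str:
        words = message.lower().split()
        is_greeting = bool(words) and words[0].strip(",.!?") in GREETINGS
        if is_greeting:
            reply = "Happy to help."  # small talk: skip retrieval entirely
        else:
            hits = self.retriever(message)
            if hits:
                reply = f"Found {len(hits)} passage(s): " + " | ".join(hits)
            else:
                reply = "No matching passages."
        self.history.append((message, reply))
        return reply
```

Keeping the retriever behind a plain callable is the design choice that makes the hybrid practical: the retrieval side can be swapped or tuned without touching the conversational logic, and vice versa.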