Introduction: The State of RAG in 2026

In 2026, building a production-grade LLM application often comes down to one pivotal decision: LangChain vs LlamaIndex for RAG. Both frameworks have evolved significantly over the last few years, and the choice between them isn’t as simple as it used to be. I’ve spent the last six months migrating several enterprise-level projects between these two ecosystems, and the nuances are what ultimately decide which one wins.

Retrieval-Augmented Generation (RAG) has moved beyond simple PDF parsing. Today, we’re talking about multimodal ingestion, agentic reasoning, and sub-second latency. If you’re still getting your bearings, you might want to start with my step-by-step retrieval-augmented generation tutorial to understand the fundamentals before diving into this architectural comparison.

Option A: LangChain – The Swiss Army Knife of AI

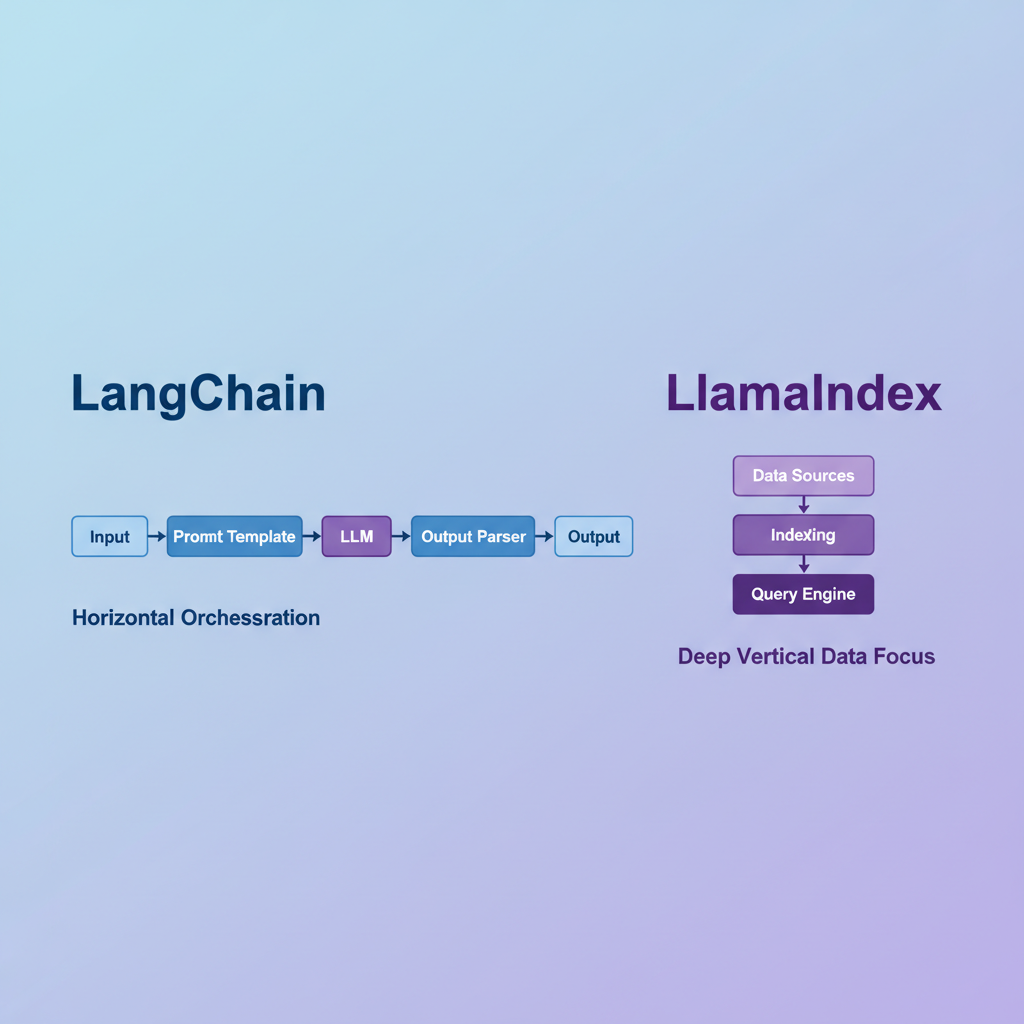

LangChain remains the most popular framework because of its sheer versatility. It isn’t just a RAG tool; it is an orchestration layer for any AI behavior you can imagine. In my experience, LangChain is the better choice when your RAG pipeline is just one small part of a larger, complex system.

Core Features

- Modular Components: Chains, prompts, and memory are all swappable.

- LangGraph Integration: LangGraph, LangChain’s library for building stateful, cyclic agent workflows as graphs, is the crown jewel for 2026. For complex, stateful loops, check out my LangGraph tutorial for multi-agent systems.

- Massive Ecosystem: If a new vector database or LLM comes out, LangChain often has an integration within 24 hours.
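To make the “swappable components” point concrete, here is a minimal, framework-free sketch of the pipe-style composition that LCEL popularized. The `Step` class, the `DOCS` dictionary, and the `fake_llm` stage are hypothetical stand-ins I wrote for illustration, not LangChain’s own classes; the only idea carried over is that each stage can be replaced as long as its input and output line up with its neighbors.

```python
from typing import Any, Callable


class Step:
    """Toy composable pipeline stage (hypothetical; not LangChain's Runnable)."""

    def __init__(self, fn: Callable[[Any], Any], name: str) -> None:
        self.fn = fn
        self.name = name

    def __or__(self, other: "Step") -> "Step":
        # Compose left to right: this stage's output becomes the next stage's input.
        return Step(lambda value: other.fn(self.fn(value)), f"{self.name} | {other.name}")

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Hypothetical mini knowledge base keyed by topic.
DOCS = {
    "langgraph": "LangGraph adds stateful, cyclic graphs on top of LangChain.",
    "llamahub": "LlamaHub hosts data loaders for LlamaIndex.",
}


def retrieve_fn(question: str) -> dict:
    # Naive keyword lookup standing in for a vector-store retriever.
    hits = [text for key, text in DOCS.items() if key in question.lower()]
    return {"question": question, "context": hits}


def prompt_fn(data: dict) -> str:
    # Fill a simple prompt template with the retrieved context.
    return f"Context: {' '.join(data['context'])}\nQuestion: {data['question']}"


retrieve = Step(retrieve_fn, "retrieve")
prompt = Step(prompt_fn, "prompt")
fake_llm = Step(str.upper, "fake_llm")  # placeholder for a real model call

# Any stage can be swapped as long as its input/output types line up.
chain = retrieve | prompt | fake_llm
```

Calling `chain.invoke("What is LangGraph?")` runs all three stages in order. Replacing `fake_llm` with a different step leaves the retriever and prompt untouched, which is the practical payoff of modular components.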

Pros and Cons

Pros: Unrivaled flexibility; powerful agentic capabilities; massive community support; excellent debugging via LangSmith.

Cons: Steep learning curve; the LangChain Expression Language (LCEL) can be verbose; heavy abstraction can make low-level retrieval logic harder to optimize.

Option B: LlamaIndex – The Precision Tool for Data

While LangChain tries to do everything, LlamaIndex focuses on doing one thing exceptionally well: connecting LLMs to your data. If your primary challenge is messy data, complex indexing, or massive document stores, LlamaIndex is usually my go-to recommendation.

Core Features

- Data Connectors (LlamaHub): More than 100 loaders for everything from Notion to Slack to S3.

- Advanced Indexing: Features like recursive retrieval and small-to-big windowing are baked into the core library.

- Engine Focus: It treats RAG as a first-class citizen, offering Query Engines and Chat Engines out of the box.
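Small-to-big retrieval is easier to grasp in code. The sketch below is a framework-agnostic illustration, not LlamaIndex’s implementation: it matches the query against individual sentences (the “small” chunks) and then returns the matched sentence together with its neighbors (the “big” context). Keyword overlap stands in for embedding similarity, and `small_to_big`, its `window` parameter, and the sample `DOC` are hypothetical.

```python
import re


def split_sentences(text: str) -> list[str]:
    # Crude sentence splitter; real pipelines use a proper parser.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def small_to_big(query: str, document: str, window: int = 1) -> str:
    """Match on single sentences (small), return the surrounding window (big)."""
    sentences = split_sentences(document)
    if not sentences:
        return ""
    q = tokens(query)
    # Score each small chunk by keyword overlap (stand-in for embedding similarity).
    scores = [len(q & tokens(s)) for s in sentences]
    best = max(range(len(sentences)), key=lambda i: scores[i])
    # Expand to the neighboring sentences before handing context to the LLM.
    lo, hi = max(0, best - window), min(len(sentences), best + window + 1)
    return " ".join(sentences[lo:hi])


# Hypothetical sample document.
DOC = (
    "Invoices are stored in S3. Each invoice has a customer ID. "
    "Refunds are processed within 14 days. Refund requests need a manager's approval. "
    "Support tickets live in Zendesk."
)
```

Here `small_to_big("How long do refunds take?", DOC)` matches only the sentence about refunds being processed, but returns it alongside the sentences before and after it. Small chunks keep matching precise, while the wider window gives the LLM enough surrounding context to answer well.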

Pros and Cons

Pros: Faster to implement for data-heavy RAG; superior out-of-the-box retrieval performance; simpler API for standard use cases; deep focus on vector database selection and optimization.

Cons: Less flexible for general-purpose AI tasks (like non-RAG agents); smaller ecosystem than LangChain’s; can feel restrictive if you want to break away from its predefined query patterns.

Feature Comparison Table

Here is how the two frameworks stack up in a head-to-head comparison for a standard RAG implementation:

| Feature | LangChain | LlamaIndex |
|---|---|---|
| Primary Focus | General AI Orchestration | Data Indexing & Retrieval |
| Learning Curve | High (Steep) | Moderate |
| Agent Support | Extensive (LangGraph) | Good (Workflows) |
| Data Ingestion | Manual/Standard | Automated/Sophisticated |
| Community Size | Huge | Large & Growing |

Pricing and Open Source Context

Both frameworks are open source (MIT License) and free to use in your local projects. However, both also have commercial cloud platforms that you’ll likely want for production monitoring and scale:

- LangSmith (LangChain): Essential for tracing and debugging complex chains. Pricing is tiered by the number of traces per month.

- LlamaCloud (LlamaIndex): Focuses on managed data parsing and indexing pipelines. It is excellent if you don’t want to build your own ETL for RAG.
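If “traces” sounds abstract, this toy decorator shows roughly what a tracing tool records for each step of a pipeline: the step name, its inputs, its output, and its latency. It is a hypothetical, standard-library-only sketch, not the LangSmith SDK; a hosted service would send these records to a backend instead of appending them to the in-memory `TRACE` list, and the `retrieve`/`generate` steps are placeholders.

```python
import functools
import time
from typing import Any, Callable

# One record per traced step; a hosted tool would ship these to a backend.
TRACE: list[dict[str, Any]] = []


def traced(fn: Callable) -> Callable:
    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        # Record the step name, inputs, output, and wall-clock latency.
        TRACE.append({
            "step": fn.__name__,
            "inputs": {"args": args, "kwargs": kwargs},
            "output": result,
            "ms": (time.perf_counter() - start) * 1000,
        })
        return result
    return wrapper


@traced
def retrieve(question: str) -> list[str]:
    # Placeholder retriever returning a fixed chunk.
    return ["LlamaCloud offers managed parsing."]


@traced
def generate(question: str, context: list[str]) -> str:
    # Placeholder generator standing in for an LLM call.
    return f"Answer based on {len(context)} chunk(s)."


def answer(question: str) -> str:
    return generate(question, retrieve(question))
```

One call to `answer(...)` produces two records, `retrieve` then `generate`. In a real multi-step chain, inspecting these per-step records is what makes slow or wrong intermediate results easy to spot.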

When to Use Which?

Choose LangChain If:

- You are building a multi-agent system where RAG is just one tool.

- You need absolute control over the logic and state of your application.

- You already have a heavy investment in the LangChain ecosystem.
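Here is a minimal sketch of what “RAG as just one tool” looks like inside a stateful loop, in the spirit of LangGraph but without its API: nodes are plain functions that update a shared state dictionary, and a `router` function picks the next node until it returns `"END"`. The node names, the hard-coded routing rules (a real agent would typically ask an LLM to decide), and the ticket ID are all hypothetical.

```python
from typing import Callable

State = dict  # shared state passed between nodes


def router(state: State) -> str:
    # Decide the next node from the current state (hard-coded rules for illustration).
    if "refund" in state["task"] and "context" not in state:
        return "rag_tool"
    if "context" in state and "ticket_id" not in state:
        return "action_tool"
    return "END"


def rag_tool(state: State) -> State:
    # Retrieval is just one tool among several.
    state["context"] = "Refunds require manager approval."
    return state


def action_tool(state: State) -> State:
    # Stand-in for an external API call, e.g. opening a ticket.
    state["ticket_id"] = "TICKET-1"  # hypothetical ID
    return state


NODES: dict[str, Callable[[State], State]] = {
    "rag_tool": rag_tool,
    "action_tool": action_tool,
}


def run(task: str, max_steps: int = 10) -> State:
    state: State = {"task": task, "history": []}
    for _ in range(max_steps):
        next_node = router(state)
        if next_node == "END":
            break
        state["history"].append(next_node)
        state = NODES[next_node](state)
    return state
```

`run("process refund for order 42")` visits `rag_tool` and then `action_tool` before stopping, while a task that needs neither ends immediately. The `max_steps` cap is a simple guard against the infinite loops that cyclic agent graphs can fall into.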

Choose LlamaIndex If:

- Your project’s success depends on the accuracy of retrieval from messy, unstructured data.

- You want a RAG system up and running in a few hours rather than days.

- You are dealing with massive scale (millions of documents) where indexing strategy is the bottleneck.
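At millions of documents, one common indexing strategy is hierarchical retrieval: search a small index of document summaries first, then search chunks only inside the winning documents. The sketch below is a simplified, framework-agnostic illustration of that idea (related to the recursive retrieval mentioned earlier, but not LlamaIndex’s implementation); the `CORPUS` data, its summaries, and the keyword-overlap scoring are hypothetical stand-ins for real embeddings.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(a: str, b: str) -> int:
    # Keyword overlap as a stand-in for embedding similarity.
    return len(tokens(a) & tokens(b))


# Hypothetical corpus: each document has a short summary plus its chunks.
CORPUS = {
    "hr-handbook": {
        "summary": "vacation leave holidays benefits policy",
        "chunks": ["Employees get 25 vacation days.", "Sick leave is unlimited."],
    },
    "finance-faq": {
        "summary": "refunds invoices payments billing",
        "chunks": ["Refunds are issued within 14 days.", "Invoices are due in 30 days."],
    },
}


def two_stage_retrieve(query: str, top_docs: int = 1, top_chunks: int = 1) -> list[str]:
    # Stage 1: rank documents by their summaries only (a much smaller index).
    docs = sorted(
        CORPUS, key=lambda d: overlap(query, CORPUS[d]["summary"]), reverse=True
    )[:top_docs]
    # Stage 2: score chunks only inside the selected documents.
    candidates = [chunk for d in docs for chunk in CORPUS[d]["chunks"]]
    return sorted(candidates, key=lambda c: overlap(query, c), reverse=True)[:top_chunks]
```

For “How fast are refunds issued?”, stage 1 picks `finance-faq` and stage 2 never scores the HR chunks at all. At real scale, skipping most of the chunk index this way is exactly why indexing strategy, rather than the LLM, tends to become the bottleneck.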

My Verdict

In my experience, LlamaIndex wins for pure RAG. The developers have spent years perfecting the “retrieval” part of RAG, making it more performant and easier to tune. However, if your application needs to do more than just answer questions from a PDF—if it needs to perform actions, interact with external APIs, and maintain complex states—then LangChain (with LangGraph) is the superior choice for building the next generation of AI agents.

As shown in the architecture comparison diagram below, the two frameworks are increasingly converging, but their philosophical roots remain distinct.