The Great AI Divide: Open Source vs Closed Source LLMs for Business

Choosing the right Large Language Model (LLM) is no longer just a developer’s hobby; it is a core strategic decision for the modern enterprise. In my experience consulting with startups and established firms over the last two years, the debate over open source vs closed source LLMs for business has shifted from ‘which is smarter’ to ‘which is more sustainable and secure.’

Closed source models like GPT-4o, Claude 3.5, and Gemini Pro offer unparalleled ‘out-of-the-box’ power. Conversely, the rise of open-weight models like Llama 3.1, Mistral, and Falcon has given businesses the ability to own their intelligence stack entirely. As we navigate the landscape in 2026, the gap in reasoning capabilities has narrowed significantly, making the decision more about infrastructure, privacy, and long-term cost.

Option A: Closed Source LLMs (Proprietary Giants)

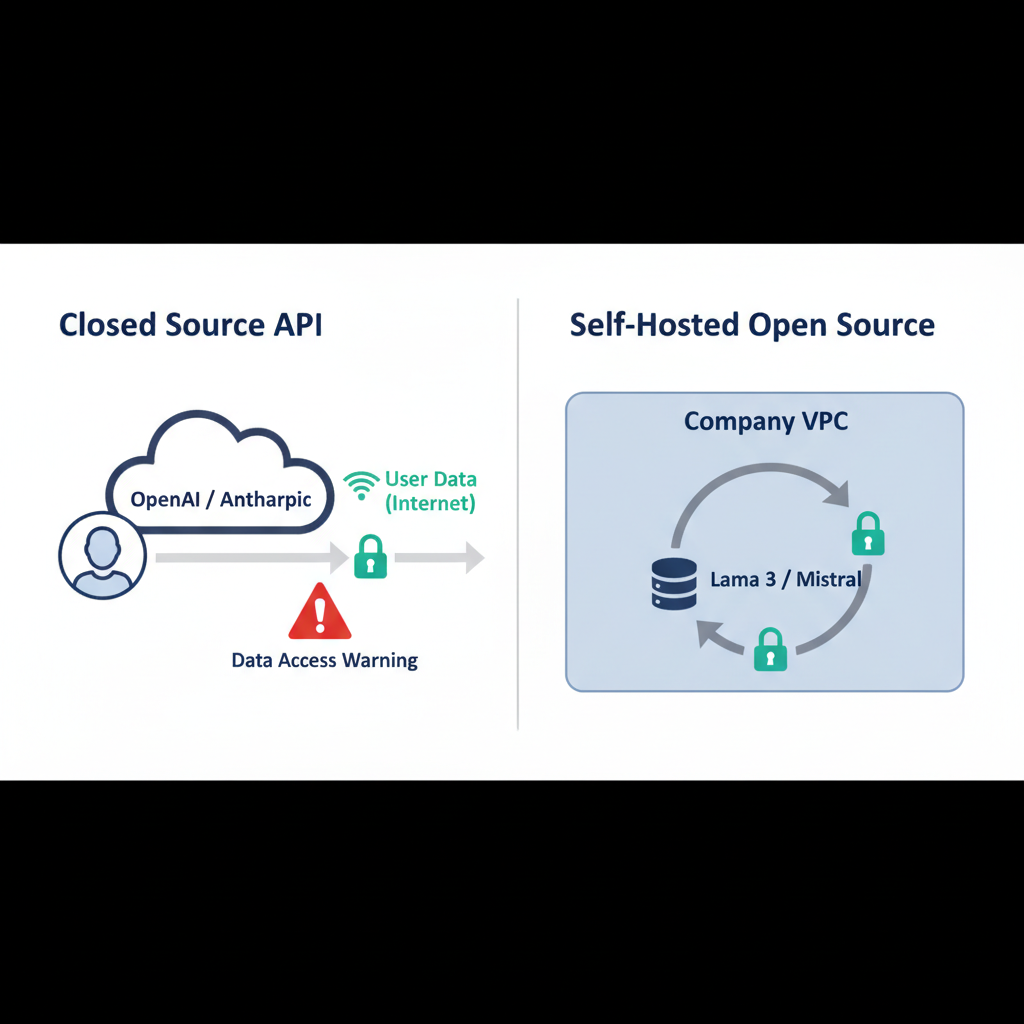

Closed source LLMs are owned and operated by companies like OpenAI, Anthropic, and Google. You access them via an API, paying for what you use.
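To make "access via an API" concrete, here is a minimal sketch that builds, but does not send, a request to OpenAI's Chat Completions endpoint using only the standard library. The `build_chat_request` helper and the placeholder key are my own illustration; other providers use different URLs and payload shapes, so treat this as one example rather than a universal recipe.

```python
import json
import urllib.request

# OpenAI's Chat Completions endpoint; other providers differ.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str, model: str = "gpt-4o") -> urllib.request.Request:
    """Build (but do not send) a chat completion request (hypothetical helper)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "sk-placeholder" is not a real key; nothing is sent over the network here.
    req = build_chat_request("sk-placeholder", "Summarize this contract clause.")
    print(req.get_method(), req.full_url)
```

Actually sending the request (for example with `urllib.request.urlopen(req)`) is the point at which your prompt leaves your network and usage-based billing applies.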

Key Features

- State-of-the-Art (SOTA) Performance: Historically, closed models have led the benchmarks in complex reasoning and multilingual support.

- Managed Infrastructure: No need to worry about H100 clusters or CUDA drivers; the provider handles the scaling.

- Rapid Innovation: Features like large context windows (200k+ tokens) usually debut here first.

Pros and Cons

Pros: Immediate setup, comprehensive documentation, and professional support tiers. It’s the fastest way to get an MVP to market.

Cons: High recurring costs at scale, potential for ‘model drift’ (behavior that changes when the provider updates the model behind the same endpoint), and inherent privacy risks. When you send data to an API, you are trusting a third party with your intellectual property. For developers concerned about this, I’ve written extensively on AI security best practices for developers to help mitigate these risks.

Option B: Open Source LLMs (The Freedom of Weights)

Open source (or more accurately, ‘open weights’) models allow you to download the model parameters and run them on your own hardware or VPC.

Key Features

- Full Data Sovereignty: Your data never leaves your VPC. This is the gold standard for healthcare or fintech.

- Fine-Tuning Potential: You can deeply customize the model on your proprietary codebase or documentation.

- Predictable Costs: Once the compute is paid for, each additional token costs very little, as long as you stay within that hardware’s capacity.

Pros and Cons

Pros: No vendor lock-in, enhanced privacy, and the ability to run offline. If you’re asking, “Should I use open source LLMs for privacy?”, the answer is usually a resounding yes.

Cons: Requires specialized DevOps knowledge to maintain. You also bear the upfront cost of hardware or reserved cloud GPU instances.
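A rough way to size that hardware is to estimate how much memory the weights alone occupy. The sketch below uses the standard approximation of parameter count times bytes per parameter. It deliberately ignores the KV cache, activations, and runtime overhead, so real requirements will be higher.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # A 70B-parameter model at common precisions (weights only).
    for bits in (16, 8, 4):
        print(f"70B @ {bits}-bit: ~{weight_memory_gb(70, bits):.0f} GB")
```

By this estimate a 70B model needs roughly 140 GB at 16-bit but about 35 GB at 4-bit, which is why quantization is what makes single-GPU serving on an 80 GB card plausible.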

Open Source vs Closed Source LLMs for Business: The Ultimate Comparison

Here is how the two options compare in a typical business environment:

| Feature | Closed Source (e.g., GPT-4o) | Open Source (e.g., Llama 3.1) |
|---|---|---|
| Setup Speed | Minutes (API Key) | Hours/Days (Inference Server) |
| Data Privacy | Provider-dependent | Absolute (Local/VPC) |
| Customization | Basic (Fine-tuning APIs) | Full (PEFT, LoRA, Full Finetune) |
| Cost Scaling | Linear (More usage = more $) | Fixed (Hardware/Server costs) |
| Reliability | High (SLA-backed) | Self-managed |
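To show why LoRA in the customization row is so much lighter than a full fine-tune, here is a small arithmetic sketch. LoRA freezes a weight matrix of shape `d_out x d_in` and trains two low-rank factors instead, which adds `rank * (d_out + d_in)` trainable parameters. The hidden size and rank below are hypothetical values I picked for illustration, not figures for any specific model.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def full_params(d_out: int, d_in: int) -> int:
    """Parameters in the original dense weight matrix."""
    return d_out * d_in


if __name__ == "__main__":
    d = 4096  # hypothetical hidden size
    r = 8     # hypothetical LoRA rank
    lora = lora_trainable_params(d, d, r)
    full = full_params(d, d)
    print(f"LoRA: {lora:,} trainable vs full: {full:,} ({lora / full:.2%})")
```

For a single square matrix with these assumed sizes, the adapter trains well under 1% of the parameters a full fine-tune would touch.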

Cost Analysis: API vs. Self-Hosting

In the early stages, closed source is almost always cheaper. You spend $10 on tokens and you’re done. However, once you hit millions of requests per month, the math changes. Running a quantized Llama 70B model on a dedicated A100 instance can be 5x cheaper than GPT-4o at high volumes. For a deeper dive into the numbers, check out my cost analysis of self-hosting LLMs.
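The crossover point can be sketched with simple arithmetic: API cost grows linearly with tokens, while a self-hosted server is roughly a fixed monthly bill. The prices in this example are placeholders I made up, not real quotes, and the model ignores engineering time and the throughput ceiling of a single server.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Linear, usage-based cost of a hosted API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume above which the fixed-cost server is cheaper than the API."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    api_price = 5.0        # hypothetical blended $ per million tokens
    server_cost = 2000.0   # hypothetical $ per month for a dedicated GPU instance
    volume = break_even_tokens(server_cost, api_price)
    print(f"Break-even at about {volume:,.0f} tokens per month")
```

Plug in your own provider pricing and instance cost; below the break-even volume the API is cheaper, above it the fixed server starts to win.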

As shown in the architecture diagram below, the complexity of self-hosting requires a robust pipeline for serving and monitoring, which is a hidden cost often overlooked by management.

Which One Should You Choose?

Use Closed Source If:

- You are a small team needing to move fast.

- Your use case requires the absolute highest reasoning capabilities (e.g., complex legal analysis).

- You don’t have a dedicated DevOps or MLOps engineer.

Use Open Source If:

- You handle sensitive PII (Personally Identifiable Information).

- You want to build a unique ‘moat’ by optimizing LLM inference for your specific industry.

- You have high-volume, low-complexity tasks where API costs would be prohibitive.
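The two checklists above can be encoded as a small helper that collects the reasons pointing each way. This is only a sketch of the criteria as I listed them; the `TeamProfile` fields are names I invented, and the function intentionally returns both lists instead of a single verdict, because real situations often have reasons on both sides.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    """Hypothetical profile mirroring the checklist items above."""
    small_team_moving_fast: bool
    needs_top_reasoning: bool
    has_mlops_engineer: bool
    handles_sensitive_pii: bool
    wants_inference_moat: bool
    high_volume_simple_tasks: bool


def reasons_by_option(p: TeamProfile) -> dict[str, list[str]]:
    """Collect the checklist reasons that favor each option."""
    reasons: dict[str, list[str]] = {"closed": [], "open": []}
    if p.small_team_moving_fast:
        reasons["closed"].append("small team that needs to move fast")
    if p.needs_top_reasoning:
        reasons["closed"].append("needs the highest reasoning capability")
    if not p.has_mlops_engineer:
        reasons["closed"].append("no dedicated DevOps/MLOps engineer")
    if p.handles_sensitive_pii:
        reasons["open"].append("handles sensitive PII")
    if p.wants_inference_moat:
        reasons["open"].append("wants a moat from optimized inference")
    if p.high_volume_simple_tasks:
        reasons["open"].append("high-volume, low-complexity workload")
    return reasons


if __name__ == "__main__":
    profile = TeamProfile(True, False, False, True, False, True)
    for option, why in reasons_by_option(profile).items():
        print(option, "->", why)
```

When both lists come back non-empty, as in this example, that is usually a sign the hybrid approach is worth considering.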

My Verdict

In my own projects at ajmani.dev, I use a hybrid approach. I use closed source models for prototyping and complex prompt engineering. Once the task is well-defined and the volume increases, I migrate those specific prompts to a fine-tuned open source model. This gives me the speed of the giants with the privacy and cost-efficiency of open source. For most businesses in 2026, the ‘Hybrid Cloud’ AI model is the winning strategy.
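One way to decide when a prompt is ready to migrate is to count calls per prompt and flag the ones that cross a volume threshold. The sketch below is a simplified illustration, not my production setup; the `PromptVolumeTracker` class, the prompt IDs, and the threshold are all hypothetical.

```python
from collections import Counter


class PromptVolumeTracker:
    """Counts calls per prompt ID and flags high-volume prompts (hypothetical helper)."""

    def __init__(self, migrate_after: int) -> None:
        self.migrate_after = migrate_after  # call count that triggers a migration review
        self.calls: Counter[str] = Counter()

    def record(self, prompt_id: str) -> None:
        """Register one call made with the given prompt."""
        self.calls[prompt_id] += 1

    def migration_candidates(self) -> list[str]:
        """Prompt IDs whose volume has reached the threshold, in sorted order."""
        return sorted(p for p, n in self.calls.items() if n >= self.migrate_after)


if __name__ == "__main__":
    tracker = PromptVolumeTracker(migrate_after=3)
    for pid in ["invoice_extract", "invoice_extract", "legal_review", "invoice_extract"]:
        tracker.record(pid)
    print(tracker.migration_candidates())
```

Volume is only half of the test: a flagged prompt should also be stable and well-defined before you invest in fine-tuning an open model for it.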