Introduction

Over the last six months, I’ve built everything from simple PDF-chat wrappers to complex autonomous AI agents. In almost every project, I’ve had to make a critical architectural decision up front: Next.js vs Remix for AI-powered apps.

The landscape of web development has shifted. We’re no longer just fetching JSON from a REST API and rendering it. We are streaming tokens from Large Language Models (LLMs), managing complex conversational state, and handling long-running server processes. Both Next.js and Remix are excellent full-stack React frameworks, but they approach the challenges of AI integration from entirely different philosophical angles.

In this comparison, I’ll break down my hands-on experiences with both frameworks, diving into their streaming capabilities, edge compatibility, ecosystem support, and overall developer experience. If you are about to spin up a new AI project, this guide will help you choose the right foundation.

Option A: Next.js for AI Apps

Next.js, backed by Vercel, has aggressively positioned itself as the default framework for the AI boom. With the introduction of the App Router and React Server Components, it offers a highly optimized, heavily integrated ecosystem.

Key Features for AI

- Vercel AI SDK Integration: This is arguably Next.js’s biggest superpower. The SDK provides hooks like `useChat` and `useCompletion` that abstract away the complexity of streaming state.

- Server Actions: Allow you to trigger AI generations directly from client components without writing boilerplate API routes.

- Edge Runtime: Crucial for AI apps to minimize Time to First Token (TTFT) by running code closer to the user.

The Pros

- Speed to Market: You can build a functioning ChatGPT clone in about 50 lines of code.

- Ecosystem: Almost every AI infrastructure tool (LangChain, LlamaIndex, Pinecone) writes its documentation with Next.js in mind first.

- Component Streaming: React Server Components allow you to stream not just text, but actual UI components dynamically generated by the LLM. You can read more about how this works in my Next.js Server Components deep dive.

The Cons

- Caching Nightmares: The aggressive default caching in the App Router can cause bizarre behavior in highly dynamic AI chat interfaces if you forget to opt out.

- Vendor Lock-in Feel: While Next.js is open source, many of its most powerful AI features rely heavily on Vercel’s proprietary infrastructure to work seamlessly.

- Steep Learning Curve: Mastering the boundary between client and server components while managing AI streaming state can be overwhelming for beginners.
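On the caching point above, opting out is explicit once you know where to look. Here is a minimal sketch, assuming a hypothetical route file such as `app/api/tip/route.ts` and a timestamp standing in for a fresh model generation. `dynamic = "force-dynamic"` is the App Router's route segment option for disabling caching on a route. Default caching behavior differs between Next.js versions, so treat this as a belt-and-braces example rather than a required step.

```typescript
// Route segment config: opt this route out of the App Router's caching.
export const dynamic = "force-dynamic";

// Hypothetical GET handler. The timestamp is a placeholder for a model call
// whose output must be regenerated on every request.
export async function GET(): Promise<Response> {
  const generated = `generated at ${new Date().toISOString()}`;
  return new Response(generated, {
    headers: {
      "Content-Type": "text/plain; charset=utf-8",
      // Also tell browsers and CDNs not to store the generated text.
      "Cache-Control": "no-store",
    },
  });
}
```

If the handler calls an upstream API with `fetch`, passing `cache: "no-store"` to that call has the same intent at the request level.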

Option B: Remix for AI Apps

Remix, maintained by Shopify, takes a step back from “magic” and relies heavily on web standards. It doesn’t have a dedicated proprietary AI SDK, but it turns out that standard web APIs are incredibly good at handling AI streams.

Key Features for AI

- Web Fetch API Native: Remix is built entirely on the Request/Response model. Streaming an LLM response is just returning a standard web `ReadableStream`.

- Loaders and Actions: Provide a crystal-clear mental model for handling data mutations, like saving a chat message to a database before streaming the AI’s reply.

- Progressive Enhancement: Form submissions work even before JavaScript hydrates, making your AI app incredibly resilient.
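To make the "just return a `ReadableStream`" point concrete, here is a rough sketch that uses only web-standard APIs. The `fakeTokens` generator is a hypothetical stand-in for an LLM client's token iterator, and `streamText` is a helper name I made up for this example.

```typescript
// Turn any async source of text chunks into a streaming HTTP response.
function streamText(tokens: AsyncIterable<string>): Response {
  const encoder = new TextEncoder();
  const iterator = tokens[Symbol.asyncIterator]();
  const body = new ReadableStream<Uint8Array>({
    // pull() runs only when the consumer is ready for more data.
    async pull(controller) {
      const { value, done } = await iterator.next();
      if (done) controller.close();
      else controller.enqueue(encoder.encode(value));
    },
    // If the client disconnects, stop the upstream token source as well.
    async cancel() {
      await iterator.return?.();
    },
  });
  return new Response(body, {
    headers: { "Content-Type": "text/plain; charset=utf-8" },
  });
}

// Placeholder token source used here instead of a real model call.
async function* fakeTokens(): AsyncGenerator<string> {
  for (const token of ["Hello", ", ", "world"]) yield token;
}

// In a Remix route, this function would be exported as `action` or `loader`.
async function action(): Promise<Response> {
  return streamText(fakeTokens());
}
```

Nothing here is Remix-specific, which is the point: the same function works anywhere that speaks Request/Response.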

The Pros

- Predictability: Because Remix uses standard web streams, you have absolute control over the data pipeline. No hidden caching layers intercepting your LLM responses.

- Data Mutation Elegance: Saving chat history is a breeze. You submit a message to an Action, save it to your DB, and stream back the LLM response, all in one predictable flow. If you want to master this, check out my guide on Remix data loading patterns.

- True Portability: Remix runs perfectly on Cloudflare Workers, Deno, Fastly, or a standard Node server without requiring framework-specific workarounds.
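The save-then-stream flow from the Data Mutation bullet can be sketched roughly like this. The in-memory `db` array and the `fakeModel` generator are hypothetical stand-ins for a real database and a real LLM client, and the form field name `message` is my assumption.

```typescript
type Message = { role: "user" | "assistant"; content: string };

// In-memory stand-in for a database table (hypothetical).
const db: Message[] = [];

// Placeholder for an LLM call that yields tokens one at a time.
async function* fakeModel(prompt: string): AsyncGenerator<string> {
  for (const token of ["You", " said: ", prompt]) yield token;
}

// Same shape as a Remix action: takes the Request, returns a Response.
async function action({ request }: { request: Request }): Promise<Response> {
  const form = await request.formData();
  const content = String(form.get("message") ?? "");

  // 1. Persist the user's message before any tokens are generated.
  db.push({ role: "user", content });

  const encoder = new TextEncoder();
  const body = new ReadableStream<Uint8Array>({
    async start(controller) {
      let reply = "";
      // 2. Forward each token to the client as it arrives.
      for await (const token of fakeModel(content)) {
        reply += token;
        controller.enqueue(encoder.encode(token));
      }
      // 3. Persist the full reply once generation has finished.
      db.push({ role: "assistant", content: reply });
      controller.close();
    },
  });
  return new Response(body, {
    headers: { "Content-Type": "text/plain; charset=utf-8" },
  });
}
```

A production version would also decide what to store when the model errors or the client disconnects mid-stream. This sketch ignores both cases.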

The Cons

- More Boilerplate: Remix ships no chat primitives of its own. Unless you pull in a library, you write your own React hooks to handle the UI state of a streaming chat interface, whereas the typical Next.js setup starts from the Vercel AI SDK’s `useChat`.

- Smaller AI Community: Finding copy-pasteable Remix examples for niche AI tools is significantly harder than finding Next.js examples.

- No Native UI Streaming: Generating React components on the fly (like Next.js’s Generative UI) is much more difficult to implement manually in Remix.
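The boilerplate in question is smaller than it sounds. As a sketch, the core of a hand-rolled streaming hook is a loop like the one below. `readTextStream` is a hypothetical helper name, and in a real hook `onUpdate` would be a React state setter.

```typescript
// Read a streamed text response chunk by chunk, reporting the text so far.
async function readTextStream(
  response: Response,
  onUpdate: (textSoFar: string) => void,
): Promise<string> {
  if (!response.body) throw new Error("Response has no body to stream");
  const reader = response.body.getReader();
  const decoder = new TextDecoder();
  let text = "";
  while (true) {
    const { value, done } = await reader.read();
    if (done) break;
    // stream: true keeps multi-byte characters intact across chunk boundaries.
    text += decoder.decode(value, { stream: true });
    onUpdate(text);
  }
  text += decoder.decode(); // flush any bytes still buffered in the decoder
  return text;
}
```

In a component you would call it with the result of `fetch` and pass something like `setReply` as `onUpdate`, so the message re-renders as each chunk lands.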

Feature Comparison Table

To make the decision easier, here is a head-to-head comparison of how both frameworks handle the most critical requirements for an AI application.

| Feature / Capability | Next.js | Remix |
|---|---|---|
| Streaming Text (LLM) | Excellent (via Vercel AI SDK) | Excellent (via Web Streams) |
| Streaming UI Components | Native support (RSC) | Requires heavy custom setup |
| State Management (Chat) | Abstracted by hooks | Manual (useState/useReducer) |
| Caching Control | Complex, default-on | Explicit, standard Cache-Control |
| Ecosystem & Examples | Massive (industry standard) | Growing, but lags behind |

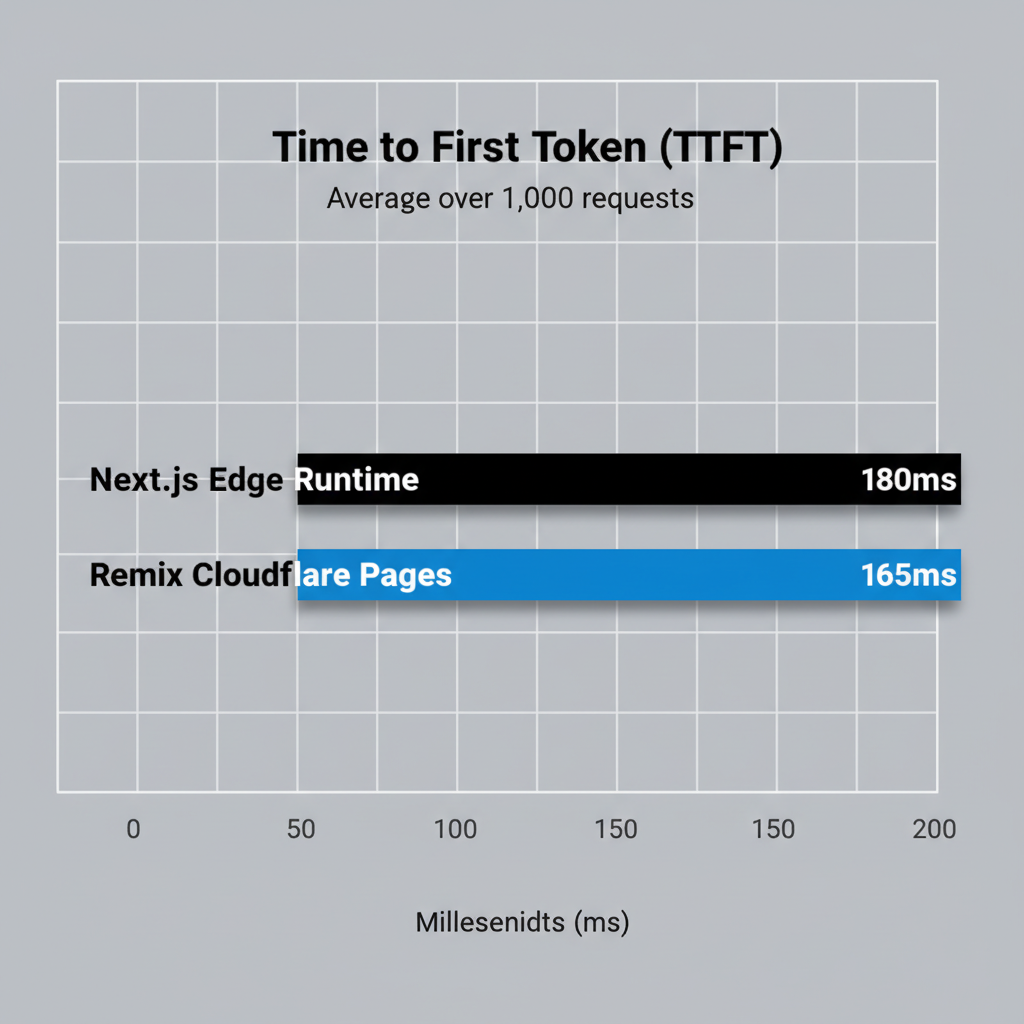

Performance: Time to First Token

In AI applications, “Time to First Token” (TTFT) is the most critical user experience metric. If a user waits more than a second for the AI to start typing, the app feels broken.

Both frameworks can run on edge networks (Cloudflare, Vercel Edge). In my testing, the raw TTFT difference between Next.js on Vercel and Remix on Cloudflare Pages was negligible, usually within 10-15 milliseconds. The bottleneck will almost always be the OpenAI or Anthropic API itself, not the framework.
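If you want to check TTFT on your own deployment, a rough sketch of a measurement helper follows. `timeToFirstToken` is a hypothetical name, and it times everything from issuing the request to receiving the first streamed chunk, so network latency is included.

```typescript
// Measure how long a streamed response takes to deliver its first chunk.
async function timeToFirstToken(
  start: () => Promise<Response>,
): Promise<number> {
  const t0 = performance.now();
  const response = await start();
  if (!response.body) throw new Error("Response has no body to stream");
  const reader = response.body.getReader();
  // Resolves when the first chunk arrives (or the stream ends empty).
  await reader.read();
  const elapsedMs = performance.now() - t0;
  // Only the first chunk matters here, so stop the rest of the stream.
  await reader.cancel();
  return elapsedMs;
}
```

You would pass it a function that issues the request, for example `() => fetch("/api/chat", { method: "POST", body })` with your own endpoint, and run it many times, since a single sample says little.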

Pricing and Infrastructure

When comparing Next.js vs Remix for AI-powered apps, you also have to look at hosting costs. AI applications naturally involve long-lived serverless invocations because responses are streamed.

- Next.js: Best deployed on Vercel. However, Vercel charges for serverless execution time. Long-running LLM streams can quickly eat into your compute hours on their Pro plan.

- Remix: Because Remix is fundamentally decoupled from any specific host, you can deploy it to Cloudflare Pages or Fly.io with ease. Cloudflare’s pricing model is generally much cheaper for long-lived WebSocket or streaming connections, making Remix highly attractive for budget-conscious AI startups.
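To see why the billing model matters more than the framework, here is a back-of-the-envelope sketch. Every number in it is an illustrative assumption, not a measured figure or a real price. It only contrasts a host that bills for the whole duration a stream stays open with one that bills for CPU time actually used.

```typescript
// Hypothetical workload description; all values are assumptions.
interface Workload {
  requestsPerMonth: number;
  streamSeconds: number; // how long each response stays open while streaming
  cpuMsPerRequest: number; // CPU time spent per request, mostly idle waiting
}

// Seconds billed per month under each billing model.
function monthlyBilledSeconds(w: Workload) {
  return {
    byDuration: w.requestsPerMonth * w.streamSeconds,
    byCpuTime: (w.requestsPerMonth * w.cpuMsPerRequest) / 1000,
  };
}

const billed = monthlyBilledSeconds({
  requestsPerMonth: 100_000,
  streamSeconds: 20,
  cpuMsPerRequest: 50,
});

// With these made-up inputs: 2,000,000 s by duration vs 5,000 s by CPU time.
console.log(billed.byDuration, billed.byCpuTime);
```

Multiply each figure by your host's actual rate to get a cost. The gap comes from the fact that a streaming request spends most of its life waiting on the model, not computing.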

Use Cases: When to Choose Which

Choose Next.js if:

- You need to build a conversational interface quickly and want to rely on the Vercel AI SDK.

- You are building “Generative UI” (e.g., an AI that returns a functional React chart component instead of markdown).

- Your team is already heavily invested in the React Server Components ecosystem.

Choose Remix if:

- Your AI app is heavily form-driven (e.g., uploading documents, processing CSVs, generating long reports).

- You want absolute control over your caching layers to prevent stale AI generations.

- You are deploying to Cloudflare or a custom VPS to keep serverless streaming costs low.

My Verdict

After building multiple production AI apps, my verdict is nuanced but clear. If your primary feature is a chat interface, Next.js wins. The Vercel AI SDK and the ability to stream React components (Generative UI) provide an unmatched developer experience that will save you weeks of engineering time.

However, if AI is just a feature within a larger, data-heavy application, Remix is the better choice. Remix’s predictable data loading, reliance on web standards, and lack of aggressive default caching make it a much more stable foundation for a complex application that happens to connect to LLMs.

Ultimately, both are incredibly capable. Your choice should depend less on the “AI” part and more on your team’s familiarity with Server Components architecture versus standard web Fetch APIs.

Frequently Asked Questions

Is Next.js or Remix better for streaming LLM responses?

Both handle streaming exceptionally well. Next.js has an edge in developer experience due to the Vercel AI SDK’s built-in hooks, while Remix provides lower-level control using standard Web Streams without framework-specific magic.

Can I use the Vercel AI SDK with Remix?

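Yes, with caveats. The core of the Vercel AI SDK runs fine in Remix actions and resource routes, and hooks like useChat are ordinary client-side React hooks that post to an HTTP endpoint, so they are not tied to Next.js. What does not carry over are the features built on React Server Components, such as Generative UI, and most official examples assume Next.js, so expect to adapt them.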

Which framework is cheaper to host for AI apps?

Remix is generally cheaper because it is host-agnostic and runs well on Cloudflare Pages or Fly.io. Next.js is usually deployed on Vercel, where long-running AI streaming requests can quickly consume serverless compute hours on higher-traffic apps.

Does Next.js App Router perform better than Remix for chat interfaces?

In raw Time to First Token (TTFT), they are nearly identical. However, Next.js has a structural advantage if you use React Server Components to stream Generative UI (actual React components) rather than just markdown text.

Are Server Components necessary for AI applications?

No. While Server Components make it easier to stream complex UI states, traditional client-side fetching and streaming via standard Web APIs (which Remix uses) are entirely capable of powering production-grade AI applications.

How do I handle WebSocket connections for real-time AI in Next.js vs Remix?

Next.js serverless functions do not support persistent WebSockets well, pushing you toward third-party services like Pusher. Remix, if hosted on a long-running Node server or Fly.io, can handle WebSockets natively much more easily.

Which framework is easier for a beginner building their first AI app?

Next.js. Most tutorials, copy-pasteable boilerplates, and official documentation from AI companies target Next.js first, so a beginner can get an AI app running much faster in the Next.js ecosystem.